Lupine Publishers Group

Research Article (ISSN: 2644-1306)

Clinical Image Analysis for Detection of Skin Cancer Using Convolution Neural Networks

Volume 1 - Issue 3

Anand Pandey, Aman Sharma and S P Syed Ibrahim*

- School of Computing Sciences and Engineering, VIT Chennai Campus, India

Received: April 29, 2019 Published: May 06, 2019

*Corresponding author: SP Syed Ibrahim, School of Computing Sciences and Engineering, VIT Chennai Campus, India

DOI: 10.32474/TRSD.2019.01.000111

Abstract

Since the industrial revolution, humankind has witnessed an exponential rise in the number of causes of death and in the resulting fatalities. Despite the enormous growth of technology since the late 1980s, researchers and doctors have not yet been able to fully develop cutting-edge technology that could play a crucial role in reducing life-threatening cases of disease around the world. Diagnosis of disease requires a high level of precision and expertise in a variety of visual aspects. Technology in the field of medical science has reached unprecedented heights and has played a major role ranging from medical assistance to automation in surgeries. Machine learning technologies have made a prominent, positive contribution towards detecting lifelong diseases at an early stage. Deep learning has played an important role in this domain by giving doctors and research scholars valuable assistance in predicting cancerous cases. This paper suggests an implementation of a CNN model to predict whether skin cancer moles are malignant or benign. Since the dataset was quite imbalanced, the proposed method applies Gaussian filtering to the sample images before processing them. We propose an architecture named “Adnet” that has a particular recursive flow of layer networks, which provides better accuracy than the pre-existing models (Figure 1).

Keywords: Cancer Classification; Deep Learning; CNN; Inception; VGG

Introduction

Deep learning is revolutionizing the medical field by helping researchers to analyze images and data in ways that were not possible earlier. A voluminous amount of data, consisting of X-ray images, doctors' reports, and other medical images, helps researchers to provide valuable insights into the disease or problem at hand. Deep learning solutions are giving great results in areas such as image analysis, object detection, and image classification. Skin cancer, a deadly disease, results from the uncontrolled growth of abnormally behaving skin cells. It generally occurs when unrepaired DNA damage to skin cells triggers mutations or genetic defects, which later lead the cells to multiply and form malignant tumors. The detection of skin cancer is a challenging task, and many methods have been proposed to help in the early detection of cancer so that medical assistance can be provided. Several algorithms and solutions have been proposed that can help to detect skin cancer from image patches. [1] used the concept of deep features in a convolutional neural network to detect the class of cancer from a 10-class cancer dataset, and [2] proposed an architecture for automated basal cell carcinoma detection. Deep learning can learn a set of high-dimensional features without the need to extract them by hand. This can help to increase the accuracy of the results and to model the learned features layer by layer. There has been an advancement in the skin cancer detection process in recent years, and many authors have successfully used CNNs for learning the features and obtaining the result. For instance, Ammara Masood et al. presented a paper that focuses on semi-supervised techniques for melanoma classification [3].

This paper takes advantage of deep learning and suggests an implementation of Convolutional Neural Network (CNN) models to predict whether skin cancer moles are malignant or benign. Models such as Inception and VGG, along with various other models, are tweaked and developed, and the experimental results show that they provide not only high accuracy but also good insights into the specificity and sensitivity of various healthcare models. Also, since the dataset was imbalanced, we tried to solve the problem using Gaussian filtering instead of the standard techniques of oversampling or undersampling. This is because imbalances in the dataset can lead to overfitting or underfitting, which is disastrous for medical, disease-related predictions.

Related Work

Automatic decision support helps doctors to identify disease early. An automatic classification system consisting of preprocessing, image segmentation, and feature extraction was proposed by [4]. A two-stage classifier has also been used to help reduce the miss rate of melanoma. Many image analytics-based methods have been developed to detect the early to mid stages of various types of cancer. [5] uses image processing for the evaluation of skin lesions; this model also uses patient history before the actual diagnosis. For border detection of pigmented skin lesions in macroscopic images, [6] proposed methods built on the inverse diffusion equation, a form of non-linear diffusion. [7] demonstrates a supervised method that exploits the color space of an image: healthy skin color is identified from a rough outer region of the skin sample image, and this border is then shrunk until the color of the inner region is almost similar to that of the outer region.

[8] proposed a fully functional library that comes preinstalled on a handheld device and performs the segmentation and classification of images, achieving high execution speeds in comparison to a remote PC or a remote server. Most of the systems are built on the baseline of detecting changes in skin lesions, and classification techniques have been compared to determine which set of features of a convolved image is more discriminative. [9] proposed that colour features often outperform texture features and yield good results in terms of specificity and sensitivity [10]. The dermoscope, or epiluminescence microscope, was first developed in 1987 and provided a diagnosis methodology that builds an image model based on incident light, oil immersion, and a magnifier [11]. However, the accuracy of the setup varied largely with the experience of the physician handling it. [12] described a process called tissue counter analysis, which is based on partitioning the images into minute square elements and working with the features of each square element. It is easy to see how the Convolutional Neural Network, which works on a similar principle, could have been inspired by it. The images yielded features that allowed the differentiation of homogeneous and high-contrast tissue areas [13]. Some of the methods that could classify images with less latency and improved accuracy made intensive use of Artificial Neural Network classifiers together with the k-Nearest Neighbors algorithm. Recently, deep learning has come to play an important role in disease detection. This paper proposes a deep learning based system to detect skin cancer early [14]. The following section deals with the proposed work.

Proposed Work

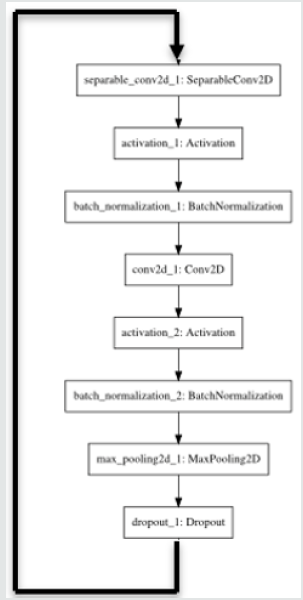

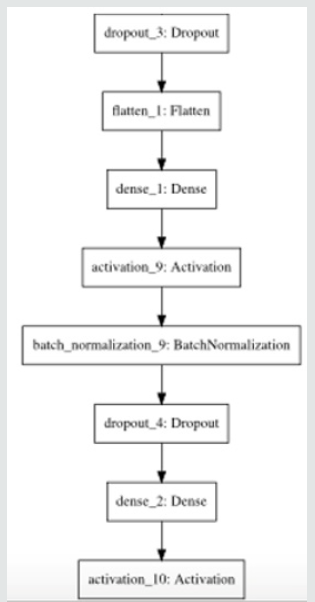

The proposed work consists of two steps (Figure 2). The first is the pre-processing of the images and the second is the building of the CNN model for training and evaluation. This is the first tier of the architecture, which works in a manner similar to a backpropagating neural network model. The model continues to backpropagate about 9 times in the architecture. The final segment of the architecture consists of a dense, fully connected layer. Finally, the softmax activation function is used to split the predicted class labels into malignant or benign. The paper applies Gaussian filtering to the images to extract the important features from the dataset and then builds a NumPy array of the image data so that the images can be processed for prediction using convolutional networks. The dataset is then partitioned into training and testing sets in the ratio 75:25, and the training and testing class labels are converted to categorical format with two classes (malignant and benign). Augmentation of the training set then generates images in the form of convolved matrices, and the model is trained with the help of an Adam optimizer. A confusion matrix is then created between the predicted and the original test class labels, and the results are plotted using the ggplot library [15].
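The pre-processing steps described above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the Gaussian `sigma`, the kernel radius, the random seed, and the synthetic 16x16 images are all hypothetical, and the labels 0 and 1 simply stand for the two classes.

```python
import numpy as np


def gaussian_kernel_1d(sigma: float, radius: int) -> np.ndarray:
    """Return a normalized 1-D Gaussian kernel of length 2 * radius + 1."""
    offsets = np.arange(-radius, radius + 1, dtype=np.float64)
    kernel = np.exp(-(offsets ** 2) / (2.0 * sigma ** 2))
    return kernel / kernel.sum()


def gaussian_filter_image(image: np.ndarray, sigma: float = 1.0) -> np.ndarray:
    """Blur an (H, W) or (H, W, C) image with a separable Gaussian filter."""
    radius = max(1, int(3 * sigma))  # assumed cut-off at about 3 sigma
    kernel = gaussian_kernel_1d(sigma, radius)

    def smooth(values: np.ndarray) -> np.ndarray:
        return np.convolve(values, kernel, mode="valid")

    img = image.astype(np.float64)
    # Reflect-pad the two spatial axes so the output keeps the input size.
    pad = [(radius, radius), (radius, radius)] + [(0, 0)] * (img.ndim - 2)
    padded = np.pad(img, pad, mode="reflect")
    # Filter along axis 0 (height), then along axis 1 (width).
    padded = np.apply_along_axis(smooth, 0, padded)
    return np.apply_along_axis(smooth, 1, padded)


def split_75_25(images: np.ndarray, labels: np.ndarray, seed: int = 0):
    """Shuffle the samples and split them 75:25 into train and test sets."""
    order = np.random.default_rng(seed).permutation(len(images))
    cut = int(0.75 * len(images))
    train_idx, test_idx = order[:cut], order[cut:]
    return images[train_idx], images[test_idx], labels[train_idx], labels[test_idx]


def to_categorical(labels: np.ndarray, num_classes: int = 2) -> np.ndarray:
    """Convert integer class labels into one-hot (categorical) rows."""
    return np.eye(num_classes, dtype=np.float32)[labels]


def softmax(logits: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    shifted = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)


# Synthetic stand-in data: 8 random RGB images with hypothetical labels.
rng = np.random.default_rng(42)
images = rng.random((8, 16, 16, 3))
labels = np.array([0, 1, 0, 0, 1, 0, 1, 0])

filtered = np.stack([gaussian_filter_image(img, sigma=1.0) for img in images])
x_train, x_test, y_train, y_test = split_75_25(filtered, labels)
y_train_cat = to_categorical(y_train)
class_probs = softmax(np.array([[2.0, 0.5]]))  # example output of a final dense layer
print(filtered.shape, x_train.shape, x_test.shape, y_train_cat.shape)
```

In a full pipeline, the filtered training array and the categorical labels would be passed to the CNN, and the softmax output of its last dense layer would give the probability of each of the two classes.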

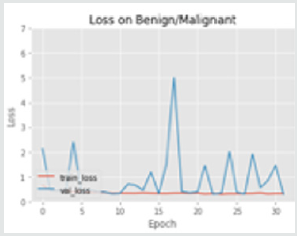

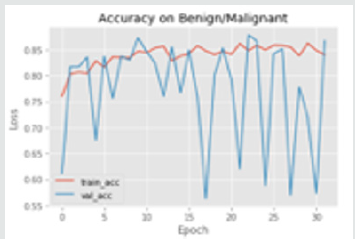

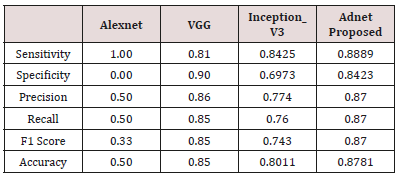

The training versus testing loss and the accuracy of the model have been plotted against the number of epochs in the accompanying graphs. The model is evaluated quantitatively using three commonly used metrics: sensitivity, specificity, and accuracy. These were calculated from the confusion matrix consisting of True Positive, True Negative, False Positive, and False Negative cases. Here a True Positive is a correctly classified sample of the benign class (Figure 3), and a True Negative is a correctly classified sample of the melanoma class. A False Positive or False Negative is a wrong classification of either class label. These metrics have been used to evaluate several convolutional architectures, comparing the accuracy and effectiveness of the proposed model against the pre-existing, widely used techniques (Figure 4). The results of the evaluation are given in Table 1.
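As a minimal sketch of how the three metrics follow from the confusion matrix, the standard definitions are sensitivity = TP / (TP + FN), specificity = TN / (TN + FP), and accuracy = (TP + TN) / (TP + TN + FP + FN). The function names, the label encoding, and the sample label lists below are hypothetical; which class counts as positive is left as a parameter, since the text above treats the benign class as positive.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count (TP, TN, FP, FN) for a binary problem with the given positive label."""
    tp = tn = fp = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive:
            if truth == positive:
                tp += 1
            else:
                fp += 1
        else:
            if truth == positive:
                fn += 1
            else:
                tn += 1
    return tp, tn, fp, fn


def evaluate(y_true, y_pred, positive=1):
    """Return sensitivity, specificity and accuracy as a dictionary."""
    tp, tn, fp, fn = confusion_counts(y_true, y_pred, positive)
    total = tp + tn + fp + fn
    return {
        # Share of positive samples that were predicted positive.
        "sensitivity": tp / (tp + fn) if (tp + fn) else float("nan"),
        # Share of negative samples that were predicted negative.
        "specificity": tn / (tn + fp) if (tn + fp) else float("nan"),
        # Share of all samples that were predicted correctly.
        "accuracy": (tp + tn) / total if total else float("nan"),
    }


# Hypothetical labels: 1 = benign (positive class), 0 = melanoma.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 1, 0, 0, 0, 1, 0, 1]
metrics = evaluate(y_true, y_pred, positive=1)
print(metrics)
```

Passing `positive=0` instead would report the same quantities with melanoma as the positive class, which swaps the roles of sensitivity and specificity.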

Conclusion and Future Work

This paper has presented an effective method for detecting whether a skin mole is prone to cancer in the near future. However, the model might not perform well in predicting the type of cancer from skin moles, since this paper is focused entirely on predicting moles of the melanoma category. In a situation that requires detection on augmented images of skin lesions, this method might fail badly. Further, the implemented architectures might assign images to the wrong category because too few images were used as a dataset. In order to produce a foolproof neural network that is capable of predicting any type of skin mole, we need to find methods and techniques with models whose results depend less on, and vary less with, the dropping out of neurons after the convolutional layers. We also need models that are capable of recalibrating themselves according to the importance of certain neuron layers present in the network. One more crucial thing that could be added to the model is a measure of the confidence of the model's output. This type of confidence-building model would take input from dermatology experts, so that the more correct results the model yields, the higher its confidence level.

References

- Kawahara, Jeremy, Aicha BenTaieb, Ghassan Hamarneh (2016) Deep features to classify skin lesions. 2016 IEEE 13th International Symposium on Biomedical Imaging (ISBI), Canada.

- Cruz-Roa, Angel Alfonso (2013) A deep learning architecture for image representation, visual interpretability and automated basal-cell carcinoma cancer detection. International Conference on Medical Image Computing and Computer-Assisted Intervention. Berlin, Heidelberg.

- Masood, Ammara, Adel Al-Jumaily, Khairul Anam (2015) Self-supervised learning model for skin cancer diagnosis. 2015 7th International IEEE/EMBS Conference on Neural Engineering (NER).

- Cavalcanti, Pablo G, Jacob Scharcanski, Gladimir VG Baranoski (2013) A two-stage approach for discriminating melanocytic skin lesions using standard cameras. Expert Systems with Applications 40(10): 4054-4064.

- Jose Fernandez Alcon, Calina Ciuhu, Warner ten Kate, Adrienne Heinrich, Natallia Uzunbajakava, et al. (2009) Automated Imaging System With Decision Support for Inspection of Pigmented Skin Lesions and Melanoma Diagnosis. IEEE Journal Of Selected Topics In Signal Processing 3(1): 14-25.

- Gao J, Zhang J, Fleming M G, Pollak I, Cognetta A B (1998) Segmentation of Dermatoscopic Images by Stabilized Inverse Diffusion Equations. Proc. of the 1998 International Conference on Image Processing 3: 823-827.

- Haeghen YV, Naeyaert JM, Lemahieu I (2000) Development of a Dermatological Workstation: Preliminary Results on Lesion Segmentation in CIELAB Color Space. Proc. of the First Int. Conf. on Color in Graphics and Image Processing.

- T Wadhawan, N Situ, K Lancaster, X Yuan, G Zouridakis (2011) Skin Scan: A portable library for melanoma detection on handheld devices. in Biomedical Imaging: From Nano to Macro, 2011 IEEE International Symposium on, pp: 133-136.

- Barata, Catarina, M Emre Celebi, Jorge S Marques (2015) Improving dermoscopy image classification using color constancy. IEEE Journal of Biomedical and Health Informatics 19(3): 1146-1152.

- Sheha Mariam A, Mai S Mabrouk, Amr Sharawy (2012) Automatic detection of melanoma skin cancer using texture analysis. International Journal of Computer Applications 42(20): 22-26.

- Abuzaghleh, Omar, Buket D Barkana, Miad Faezipour (2014) Automated skin lesion analysis based on color and shape geometry feature set for melanoma early detection and prevention. IEEE Long Island Systems, Applications and Technology (LISAT) Conference.

- Wiltgen M, Gerger A, Wagner C, Smolle J (2008) Automatic identification of diagnostic significant regions in confocal laser scanning microscopy of melanocytic skin tumors. Methods of Information in Medicine 47(1): 14-25.

- Wang Edward J, Li W, Junyi Zhu, Rana R, Patel SN (2017) Noninvasive hemoglobin measurement using unmodified smartphone camera and white flash. 2017 39th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC). pp: 2333-2336.

- Raghave Prabhu, Understanding of convolutional neural network in CNN deep learning.

- Chollet, François (2017) Xception: Deep learning with depthwise separable convolutions. Proceedings of the IEEE conference on computer vision and pattern recognition.